Since 2022, we have been thrust into relationships with chatbots via products like ChatGPT (OpenAI), Gemini (Google), Claude (Anthropic), Copilot (Microsoft) and Grok (xAI). And while chatbots are already a very popular use of generative AI, we are told by company spokespeople, the Oligarchs, to brace ourselves for AI’s true potential (sentience!) and its much bigger impact on the world (sex robots?), once their data centres are fully built and plugged into endless energy sources.

Years in, some of us are growing more than a little bit tired of the industry’s self-aggrandising promotional discourse. A lot of AI has already funnelled itself into the most predictable of markets, like autonomous weapons, surveillance, dynamic advertising, and deepfake porn. So, on the one hand, as critical scholars, we see clearly that the end of AI is already here, in terms of providing societal benefits worthy of the infrastructural investments being made, while on the other hand, we note that the hugely destructive and deceptive deployment of generative AI nevertheless continues to inflate the world’s economy in typical ways, but at scales never before seen and stakes never before imagined. It is therefore not so much that AI has no potential for good, but rather that it is hard to buy into anything that has to sell itself so hard (and is so far making life worse).

There are a lot of people who use general-purpose generative AI — be it as a search engine that provides easy summaries, or for transcription purposes, for research, to generate synthetic homework, for “vibe coding”, as a stand-in for friend, therapist or doctor… or to make “decline porn”,1 or profile protesters, or surveil workers. Whatever the case — misdirected or outright violent — there’s no denying that LLM-based chatbots are in use and that humans are generally compelled by the format of privately texting with an all-knowing bot.2 We are told to think of these conversations as tapping into the collective consciousness of all past knowledge — a repository of everything ever recorded. As Anthropic CEO Dario Amodei sells it, “every AI cluster will have the brainpower of 50 million Nobel Prize winners.”3

As widely noted, however, the chatbot’s most notable feature is not its “brainpower” but rather how desperately sycophantic it is by default. This means that it feels like you are interacting with a chatbot that really “gets you” and makes you feel good and understood.4 Chatbots are programmed to never truly challenge the prompter’s ideas, to such a degree that they can cause people to depart from consensus reality.5 This has been referred to as “AI psychosis”: the feeling that you are better understood by the chatbot than by people, or the belief that “AI” is more objective and neutral than experts, journalists, or your neighbours. AI psychosis can also mean being convinced that you are, in fact, superior to others: an unrecognised genius, or special in ways that the people around you simply don’t recognise, but that the chatbot does. This is what happens when you sell AI as god-like: people might actually end up believing that they are having spiritual awakenings.

Psychosis might feel like too serious a diagnosis to attach to the use of something virtual like AI chatbots, even though the consequences are, in fact, often rather serious, especially when they lead to suicide, or to harming others.6 But such a framing is also a way of (dis)placing the phenomenon onto individual users, as if it were a matter of personal mental health issues. This is important to consider because one of the biggest shifts online in the past two decades has been towards profile-driven identities on social media, where “influencers” (loosely defined) are perceived to build themselves as brands, as entrepreneurs of their own marketable lives. This seems especially important here in the context of the manosphere, the online realm where men attempt to understand masculinity, often in reaction to feminism. The manosphere has promoted a subculture that mimics the algorithm by counting and measuring everything, from the space between pupils to precise “macros” counts to weighted protein intake to the number of followers on TikTok. It measures, categorises, and ranks everything and everyone, and forces a comparison. It forces categories and rates unmeasurable things by standards entirely made up by the subculture, but presented as value-neutral or, in some undisclosed way, scientific. Arguably, generative AI shares much of this manosphere logic, which blurs the manufacturing of objectivity and the fabrication of neutrality.

For the Oligarchs, owners of the data centres that hold and host social media and generative AI alike, this has been an opportunity to inculcate in users the idea of AI chatbots as an extension of social media, whereby you, the individual user, are at the centre of everything. Part of the long arc of the Oligarchs’ political project has been exactly this: to individuate, to make your relationship be with the platform itself; these days, via an unwitting stream-of-consciousness testimonial that the user engages in with a chatbot. This means that the chatbot exchange that seems so private and intimate, between you and an all-knowing bot, is actually you in conversation with the tech company providing the service, which dutifully maintains logs and records of everything you share on its platforms. Users become complicit in their own surveillance: AI as panopticon, a work of architecture “that allows a watchman to observe occupants without the occupants knowing whether or not they are being watched.”7

The Oligarchs have long primed users for this iteration of AI by way of social media conditioning. AI could have been many things, but its manifestation — as chatbots and platforms that construct informational totalities — grows from having users build personal accounts, drive engagement from them, and lock into online worlds. Online, we are shaped by algorithms and become the Oligarchs’ messengers.8 This is all perhaps best understood as a collection of undoings. When Cory Doctorow talks about enshittification, this is in part what he is describing: first, the platform is good for users, luring them in with promises of social connection and networking; eventually, it abandons users for businesses, which is why everything eventually gets taken over by advertisements. Ultimately, however, the platform is remade to exploit both businesses and users in order to profit only its owners, like Google, Meta and Amazon.9 This is true for profits, as Doctorow shows, but also for the political interests of their owners, beyond direct economic gains, as an extension of the manosphere.

The internet has slowly been transformed over the decades, so its effects set new baselines for expectations every few years. Today, the “reality” you might have your strongest tether to is an illusory entrenchment in the online world — the “offline” being secondary. And for those suffering from AI psychosis, this secondary reality, of being offline, becomes hugely dissonant with what they have been compelled to feel and believe from company algorithms. Social media and AI chatbots alike are there only to serve the platforms through which they operate; as Abeba Birhane (2025) argues, “we should shed the idea that ‘AI’ is a technological artefact with political features and recognise it as a political artefact through and through.”10

The Oligarchs know that shocking and sensationalist online content works best for their bottom line by keeping everyone disturbed and entranced.11 This is why AI is an easy thing to integrate into the present moment: we have been primed for decades by social media to be distracted and then entertained in quick succession, endlessly. Commercial content moderators sanitise the internet for the average user, so what is left is another layer of psychosocial detritus lodged into the system by companies through black-boxed algorithms, “clippers”, and other types of content-promoting tactics.12 These tactics have explicit political aims to shape and manipulate users by not only deeply influencing their beliefs, but also convincing them that those beliefs are their own. This happens in part by marketing social media as more legitimate, free and authentic than other types of media or institutional knowledge — framing it as “real” people, autodidacts, and contrarians fighting against the establishment. But what is happening is that users — and influencers especially — are unwittingly working for the Oligarchs as entertainers without being able to articulate their own political positions because engagement itself has become the dominant (or only) ideology, reducing the entirety of their worldview to being watched and followed online.

By 2026, users interact with the content of mostly strangers (humans and bots) and advertisers, deemed to be part of a refined and individualised algorithm.13 Users often think that they are training their algorithm to show them the types of content they enjoy, but fail to recognise that this process is highly extractive of them: the platforms are, in fact, training and habituating them. As José Marichal (2025) puts it, “platforms encourage us to produce opinions and content experiences, but algorithms encourage us to classify ourselves through our pursuit of interests by giving us more of what we previously asked for”.14 This kind of turn (and turnover) to algorithmic logics has also meant that content itself performs to a proprietary formula; influencers, for example, know to post in ways that are counted and captured by the metrics that drive their content. To be counted is to be made relevant, to have shares and likes and to keep people on your profile to show your popularity. All value comes from what is counted, all of it a measure of something that translates to profit.15 Growing one’s audience is somewhat agnostic to the audience’s appreciation of the content, however; this is foremost a “click and ragebait industrial complex”.16 Some describe this as giving way to a “dead internet” where “many of the accounts that engage with such content also appear to be managed by artificial intelligence agents […]” which “creates a vicious cycle of artificial engagement, one that has no clear agenda and no longer involves humans at all.”17 From this perspective, the internet is already a wasteland of fake content and interactions, fuelled by advertisements for often fraudulent products and distorted political commentary. And this problem is now irreversible because AI slop is embedded into everything online forevermore. There is no disentangling AI-generated content from the rest.

But, put like that, who and what does all of this serve? Who or what would want this?

To answer these questions, it helps to think of AI as having a logic that shapes everything it stands for. The logic is that all its value is extruded and extrapolated from what is quantified. To quantify everything, even things that are not quantifiable. To commodify and codify. So, what we are being sold through all this AI hype is that everything should be reducible to categories and hierarchies, put in the form of measures and statistics for prediction. However, if you understand that counting, measuring and predicting are always situated, and therefore subjective and political, you might not be as excited about such AI-determined futures. You may, in fact, be baffled by the premise of the sales pitch. The bigger AI project asks that you abandon meaning and feeling, to believe in a neutral, objective, all-knowing source built from massively large datasets hosted in hyperscale data centres. AI asks that you buy into the idea that more data means being closer to The Truth. It means that you should want to offload your limited cognition to the super machine. And perhaps most dangerously — that you understand all of this as a scientific endeavour.

These are the logics that critical scholars have been working against for a very long time, showing how socially constructed the very notions of objectivity and calculability are, and how not everything can (or should) be measured using scientific methods. The rage against DEI and the humanities is part of the ongoing project of building up the logics of AI and metrics as the great authority — the same logics that enable the surveillance and control of workers by tracking their movements, reinvent phrenology by measuring faces and bodies as data points, and dismiss anything that can’t prove its worth in graphs and charts.

This is why white supremacy, transphobia, and incel culture, alongside anti-intellectualism, anti-scientific authority, anti-expertise thinking, and being against higher education, and the arts and humanities more generally, have been required as groundwork for AI — and why it can be understood as a fascist project.18 In other words, it is not a mere coincidence that AI is entering the world now, as it has. Fascism is the ideological choice that tech CEOs make and help shape because it makes them richer, of course, but also because it allows them to manipulate and observe the world at a distance — like a model or a simulation — to see how things play out. It is perhaps best thought of as a dark kink of the Oligarchs — so bored and empty that they shake up the stock market to make themselves feel something… Clearly, it’s worth analysing their motives as political and psychological, because the problems do not necessarily lie with computers, or even with AI, but squarely with them.

This also explains why there is a particular segment of users of social media and AI that constitute tech bro culture (EA-crypto-looksmaxxing and incel-raw milk adjacent) that are driven to defend the rich and powerful against any kind of criticism.19 The Oligarchs are surrounded by sycophants and lack the ability to meet criticism or even engage with different perspectives. Demographically, the urge to defend these men is a reflex of mostly young white men — this is how the manosphere remains so powerful. Social media platforms have, for decades, been grooming young white men to embrace the message core to tech bro culture: that societal progress is a product of technological advancement, that tech CEOs are the smartest people on the planet, and, more importantly, to also see themselves as future bosses rather than the much more likely scenario of remaining precarious tech workers (if employed at all).

This has meant more than adopting the idea that technology determines the future; it has also meant actively dismissing the social and political aspects of that same future. One way they have done this is by linking specific demographics to particular kinds of content and offloading all responsibility for users’ engagement with platforms. There is very little that platforms are legally accountable for. They perpetuate the idea that responsibility is personal rather than addressing governance, policy, or systemic oppression. They create a structural inability to understand humanity as a collective in favour of the self-made individual framing — rhetoric that stems from tech CEOs, the manosphere, wellness influencers, but also universities that have long pushed for everything to be “entrepreneurialism” as they have abandoned the mission of teaching for just futures — in favour of teaching for an imaginary future workforce for extractive or military industries that further entrench these nihilistic-political beliefs.

We need only look at the political right’s coordinated fight against critical race theory and gender studies, and at the violence perpetrated against trans people, to see the threat that these fields and communities pose to a tech-forward narrative. They are a threat because they challenge the foundations of universality and objectivity required for the generative AI industry to be perceived as unbiased and separate from politics, and they challenge the idea that everything can be measured, controlled, and predicted. Most importantly, they challenge the idea that the Oligarchs, the “master race” at the top of the food chain, are the best positioned to take on this project and determine next steps.

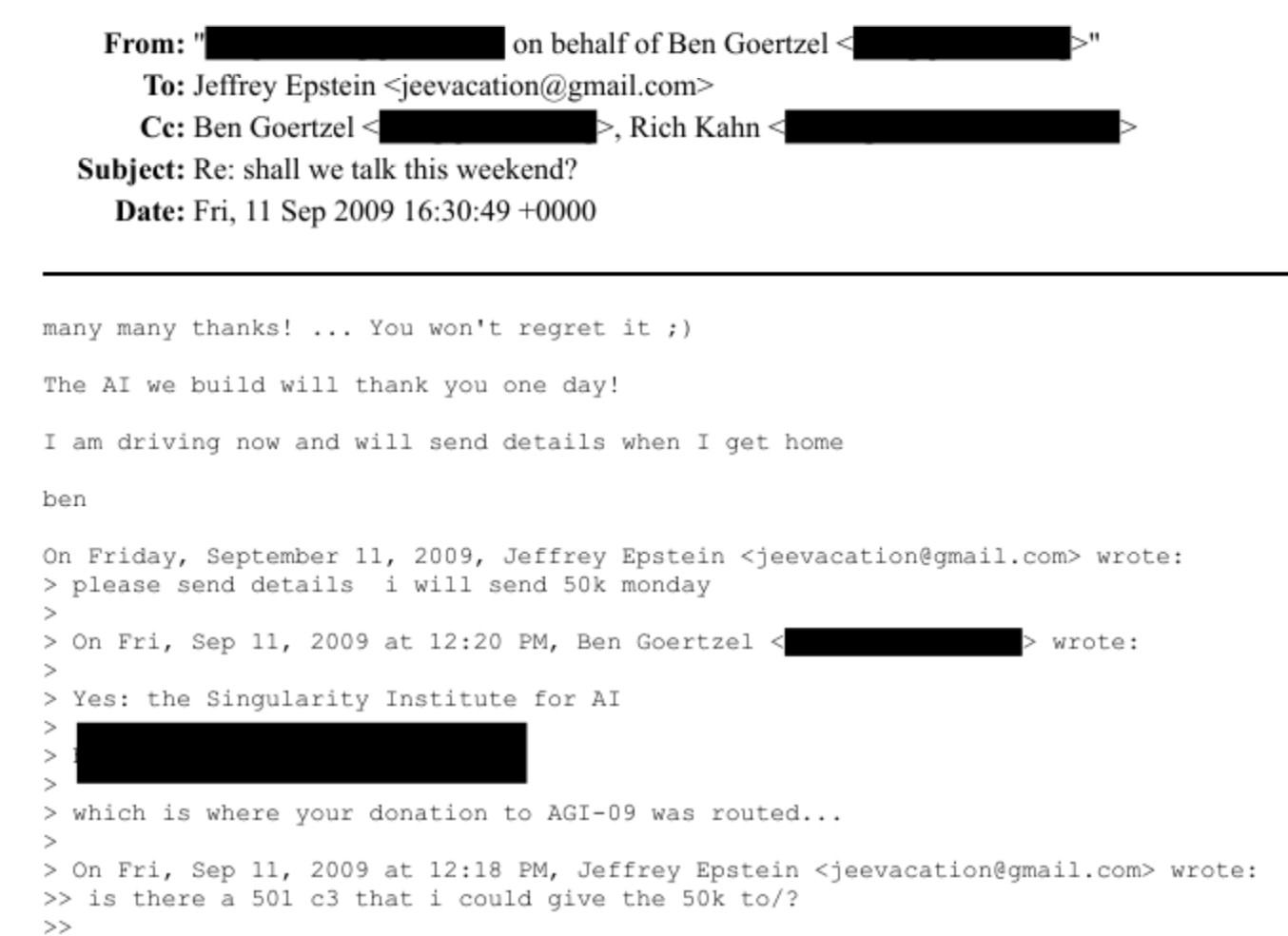

Perhaps nothing illustrates this more flagrantly than the recently released batch of Epstein emails that show his and his network’s obsession with immortality, “the singularity”, and other AI investments that would build up a new era of datafied eugenics. All evidence points to AI being funded and powered by the ultra-rich for their own pleasure and to the benefit of the manosphere. Just like there is no such thing as the “invisible hand of the market” that shapes and balances the needs and wants of the people, there is also no version of AI that is simply a repository of human knowledge to draw from as a commons. Instead, AI under fascist capitalism remakes the entire world into Epstein’s island.

From that perspective, it matters a lot less what tricks AI bots are able to accomplish, or what new gadgets come to market, or how you justify your own use of ChatGPT. None of that matters at all. We often turn to computer scientists and engineers to tell us what AI is or what the future will look like, when, in fact, what we are seeing is a massive social experiment conducted in plain sight by tech companies. This is where discussions of AI need to be. Specifically, we need critical scholars and activists who are able to make sense of AI as a cultural moment and as a political text. Because what we are witnessing is a collective psychosocial phenomenon that has more to do with humans than machines — more with whiteness and masculinity. All the machines do is make predictions at scale. This ability to predict words and narrate or depict them in human-like terms is impressive, no doubt, but what is much more interesting and difficult to understand is how deeply disturbed and enchanted some people have become with a relatively simple technological idea at scale. If humans aren’t able to process this illusion — like trauma — it overwhelms them.

Interestingly, if we flip this script, we could argue that trillion-dollar investments into a largely unreliable or unproven prototype, like generative AI, are also a form of grand delusion: a different type of affliction of the rich and powerful that remains largely unaddressed because wealth and power are the main determinants of what gets to be (and be endured), but also because they are surrounded by sycophants who validate every idea. AI is a bad idea. Because the rich control everything, and because those who oppose them stand to be humiliated, demoted, or destroyed, we tend not to name the follies of the powerful. We fail to diagnose a societal illness like what is happening right now with the global buy-in to AI. But let it be noted here: the delusions of the Oligarchs might at first manifest as infrastructure, but we will later have to analyse them as societal ruins. So, it might be easier to act now, to resist the data centre buildouts that usher in their worldviews, than to rebuild a society that has collapsed from a failed social experiment.

Notes

1. Jide Ehizele, “AI decline porn is a distortion of modern Britain”, UnHerd, 22 February 2026.
2. Seemingly private and seemingly all-knowing.
3. Marco Quiroz-Gutierrez, “‘Country of geniuses in a data center’: Every AI cluster will have the brainpower of 50 million Nobel Prize winners, Anthropic CEO says”, Fortune, 27 January 2026.
4. You can (kind of) switch off this feature, but the experience is much less enjoyable.
5. Consensus reality means something like debating and settling ideas over and over again.
6. Georgia Wells, “OpenAI Employees Raised Alarms About Canada Shooting Suspect Months Ago
7. Thomas McMullan, “What does the panopticon mean in the age of digital surveillance?”, The Guardian, 23 July 2015.
8. Germain Gauthier, Roland Hodler, Philine Widmer & Ekaterina Zhuravskaya, “The political effects of X’s feed algorithm”, Nature, 2026.
9. “WHO BROKE THE INTERNET? Understood”, CBC News, 18 August 2025.
10. Abeba Birhane, “Bending the Arc of AI towards the Public Interest”, AI Accountability Lab, 17 February 2025.
11. Businesses are now having to influence the data ingested for training AI chatbots so that their products can be part of the synthetic output (as a kind of product placement, or as a way to create a need for something). See: Erin Griffith, “Chatbots Are the New Influencers Brands Must Woo”, The New York Times, 17 February 2026.
12. Boaz Sobrado, “Inside The ‘Clipping Farms’ Driving Fintech’s Marketing Boom”, Forbes, 11 February 2026.
13. Targeted advertising and dynamic pricing are other parts of this evolution.
14. José Marichal, You Must Become an Algorithmic Problem: Renegotiating the Socio-Technical Contract, 2025.
15. If you’re in your 20s, you’ve been online your entire life and the internet has probably shaped you more than your friends, family or community have. If you’re in your 50s or older, your life has been divided between pre- and post-Internet, which is its own perspective: arguably the last of humankind to know offline life at all.
16. “Decline porn explained, and why Clavicular is misunderstood”, BBC Top Comment, 20 February 2026.
17. Jake Renzella and Vlada Rozova, “The ‘dead internet theory’ makes eerie claims about an AI-run web. The truth is more sinister”, The Conversation, 19 May 2024.
18. Alina Snisarenko, “Ford tells students to not pick ‘basket-weaving courses’ in wake of OSAP cuts”, CBC News, 17 February 2026; Dan McQuillan, Resisting AI: An Anti-fascist Approach to Artificial Intelligence, 2022; Tim Bousquet, “AI is fascism”, Halifax Examiner, 1 October 2025.
19. This is a badly written piece written by a known “effective altruist” attempting (and failing) to take down critiques of AI: Dan Kagan-Kans, “The left is missing out on AI”, Transformer, 16 February 2026.